BimanGrasp — Bimanual Grasp Synthesis for Dexterous Robot Hands

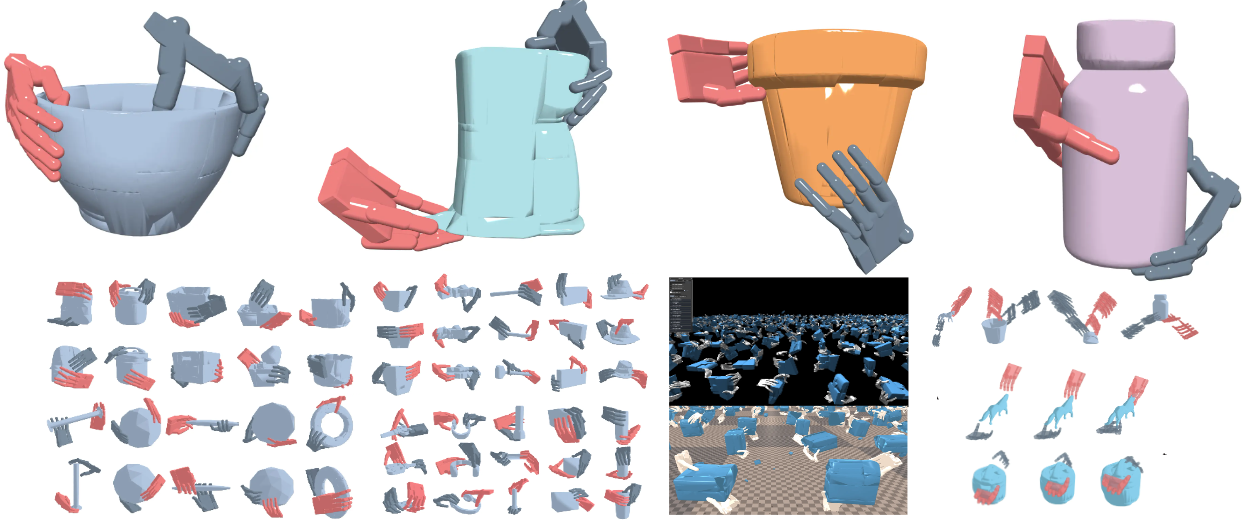

Physics-verified bimanual grasp synthesis for dexterous hands, with a 150K-scale dataset built over 900 everyday objects.

BimanGrasp treats bimanual grasp synthesis as a physics-first problem. Instead of guessing poses from appearance alone, it searches in the joint space of two dexterous hands, checks penetration explicitly, and keeps only grasps that survive simulation.

Large or torque-heavy objects often need two coordinated contacts; BimanGrasp targets exactly this regime instead of reducing the problem to two independent single-hand grasps.

Problem setting

Many daily objects are awkward for one hand but routine for two: basins, mugs with large handles, kitchen containers, light home appliances, and objects whose mass produces large torques. Existing dexterous grasp pipelines mostly optimize one hand at a time, which misses the coordination structure that makes these objects manageable.

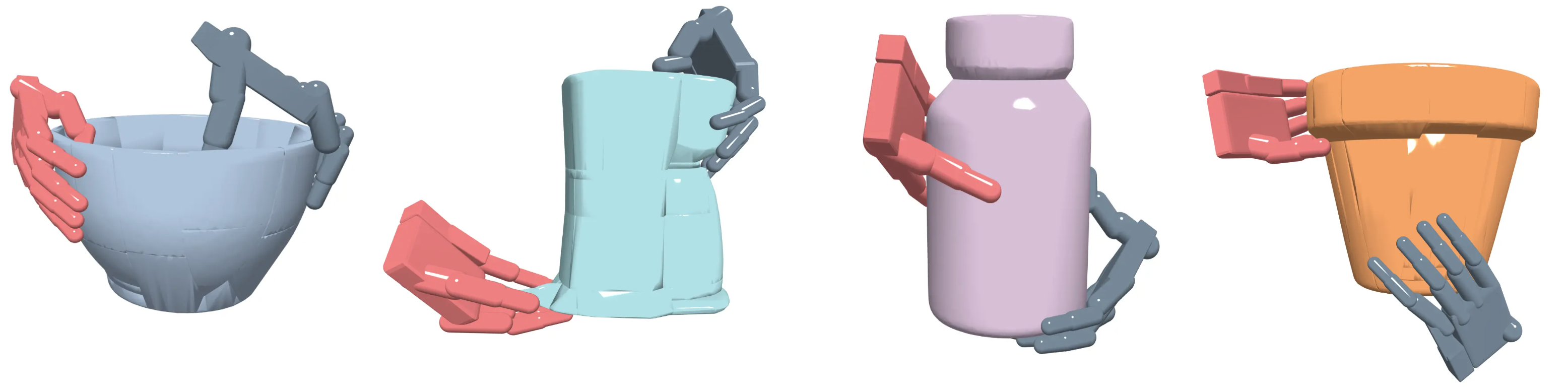

BimanGrasp addresses that gap with a three-stage pipeline:

- initialize two hands around the object with symmetric, human-like approach poses;

- optimize both hands jointly with an energy that mixes stability and feasibility;

- verify each candidate in Isaac Gym, then reuse the verified set to train a fast diffusion generator.
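The symmetric initialization in the first stage can be illustrated with a reflection across a plane through the object. This is a minimal sketch under stated assumptions, not the paper's initialization code: the function name `mirror_pose`, the choice of a single mirror plane, and the convention that the left-hand model is the mirror image of the right-hand model are all assumptions made here for illustration.

```python
import numpy as np

def mirror_pose(position, rotation, plane_point, plane_normal):
    """Reflect a hand pose across a plane (hypothetical helper, for illustration).

    position: (3,) wrist position; rotation: (3, 3) wrist orientation.
    The plane is given by a point on it and a normal vector.
    """
    n = plane_normal / np.linalg.norm(plane_normal)
    # Householder matrix: reflects vectors across the plane with normal n.
    M = np.eye(3) - 2.0 * np.outer(n, n)
    mirrored_position = plane_point + M @ (position - plane_point)
    # Conjugating by M keeps det = +1, so the result is still a rotation.
    # This assumes the other hand's model is itself a mirrored copy.
    mirrored_rotation = M @ rotation @ M
    return mirrored_position, mirrored_rotation

# Usage: place a right hand, then derive the left hand across the plane x = 0.
right_position = np.array([0.2, 0.1, 0.3])
right_rotation = np.eye(3)
left_position, left_rotation = mirror_pose(
    right_position, right_rotation,
    plane_point=np.zeros(3), plane_normal=np.array([1.0, 0.0, 0.0]),
)
```

Starting the two hands as mirror images gives the joint optimizer a balanced configuration to refine, rather than two unrelated single-hand guesses.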

The result is both an offline synthesis algorithm and a reusable dataset for learning-based generation.

Optimization objective

The paper formulates grasp search as joint optimization over two hand poses and two hand configurations, with an energy that decomposes into several terms.

This decomposition is clean and useful:

- A distance term pulls fingers and palms toward the object surface.

- A force-closure term encourages force closure through contact geometry.

- A wrench term keeps the wrench matrix well-conditioned, so the grasp can resist disturbances from more than one direction.

- Penetration terms suppress hand-object, self, and inter-hand penetrations.

- A joint-limit term keeps all 44 finger joints inside valid limits.
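Two of the terms above can be sketched as simple penalties to make their roles concrete. This is an illustrative sketch only: the function names, the frictionless point-contact model, the singular-value ratio used as a conditioning measure, and the weights are assumptions made here, not the paper's definitions.

```python
import numpy as np

def skew(p):
    """Cross-product matrix, so that skew(p) @ f equals np.cross(p, f)."""
    x, y, z = p
    return np.array([[0.0, -z, y], [z, 0.0, -x], [-y, x, 0.0]])

def grasp_matrix(contacts):
    """Stack [I; skew(p_i)] per contact point into a 6 x 3n wrench map."""
    return np.hstack([np.vstack([np.eye(3), skew(p)]) for p in contacts])

def conditioning_penalty(contacts, eps=1e-9):
    """Largest over smallest singular value; large means ill-conditioned."""
    s = np.linalg.svd(grasp_matrix(contacts), compute_uv=False)
    return float(s[0] / (s[-1] + eps))

def joint_limit_penalty(q, lower, upper):
    """Total amount by which joint angles fall outside [lower, upper]."""
    return float(np.sum(np.maximum(q - upper, 0.0) + np.maximum(lower - q, 0.0)))

def total_energy(terms, weights):
    """Weighted sum of named energy terms (weights are illustrative)."""
    return sum(weights[name] * value for name, value in terms.items())

# Usage: four contacts spread around the object versus two collinear ones.
spread = [np.array(p, dtype=float) for p in [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0)]]
collinear = spread[:2]
q = np.zeros(44)  # 44 finger joints across both hands, as in the text
terms = {
    "wrench": conditioning_penalty(spread),
    "joints": joint_limit_penalty(q, -np.ones(44), np.ones(44)),
}
energy = total_energy(terms, {"wrench": 1.0, "joints": 10.0})
```

Two collinear contacts cannot produce torque about the line joining them, so their wrench map loses rank and the conditioning penalty becomes very large. Contacts spread around the object avoid this, which is the sense in which a well-conditioned wrench matrix resists disturbances from more than one direction.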

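The optimizer is MALA (the Metropolis-adjusted Langevin algorithm) rather than plain gradient descent. That detail matters here: the search space is high-dimensional, non-convex, and full of symmetric local minima, and MALA's noisy gradient proposals with an accept/reject step can leave minima where plain descent would stall.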
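To show what a MALA update looks like, here is a textbook version on a toy double-well energy. It is a sketch under stated assumptions: the energy, step size, and function names are made up for illustration, and the paper's optimizer may add details (such as step-size schedules or annealing) that are not shown here.

```python
import numpy as np

def mala_step(x, energy, grad, step, rng):
    """One textbook MALA step targeting p(x) proportional to exp(-energy(x))."""
    # Langevin proposal: a gradient step plus Gaussian noise.
    mean_fwd = x - step * grad(x)
    proposal = mean_fwd + np.sqrt(2.0 * step) * rng.standard_normal(x.shape)
    # Mean of the reverse proposal, needed for the Metropolis correction.
    mean_bwd = proposal - step * grad(proposal)
    log_q_fwd = -np.sum((proposal - mean_fwd) ** 2) / (4.0 * step)
    log_q_bwd = -np.sum((x - mean_bwd) ** 2) / (4.0 * step)
    log_alpha = energy(x) - energy(proposal) + log_q_bwd - log_q_fwd
    accepted = bool(np.log(rng.uniform()) < log_alpha)
    return (proposal if accepted else x), accepted

def toy_energy(x):
    """Double well per coordinate, with symmetric minima at +1 and -1."""
    return float(np.sum((x ** 2 - 1.0) ** 2))

def toy_grad(x):
    """Analytic gradient of toy_energy."""
    return 4.0 * x * (x ** 2 - 1.0)

# Usage: start on the ridge between the two wells and run a short chain.
rng = np.random.default_rng(0)
x = np.zeros(4)
for _ in range(200):
    x, _ = mala_step(x, toy_energy, toy_grad, step=0.01, rng=rng)
```

The gradient pulls each coordinate toward a well, the noise lets the chain cross between symmetric wells, and the accept/reject test keeps the discretized dynamics consistent with the target distribution.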

The full pipeline has a clear structure: optimize grasps, verify them, then amortize the result with a diffusion model.

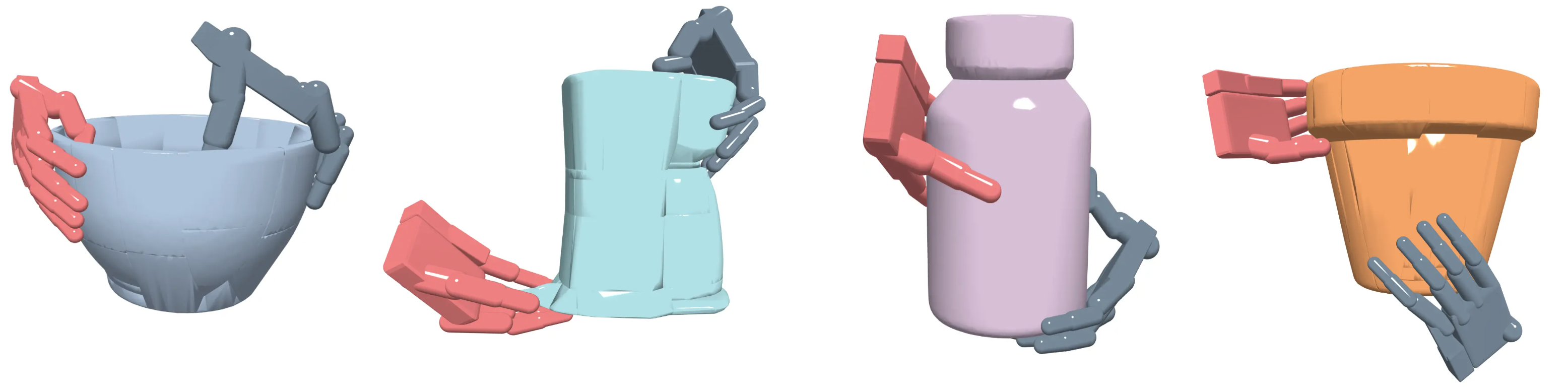

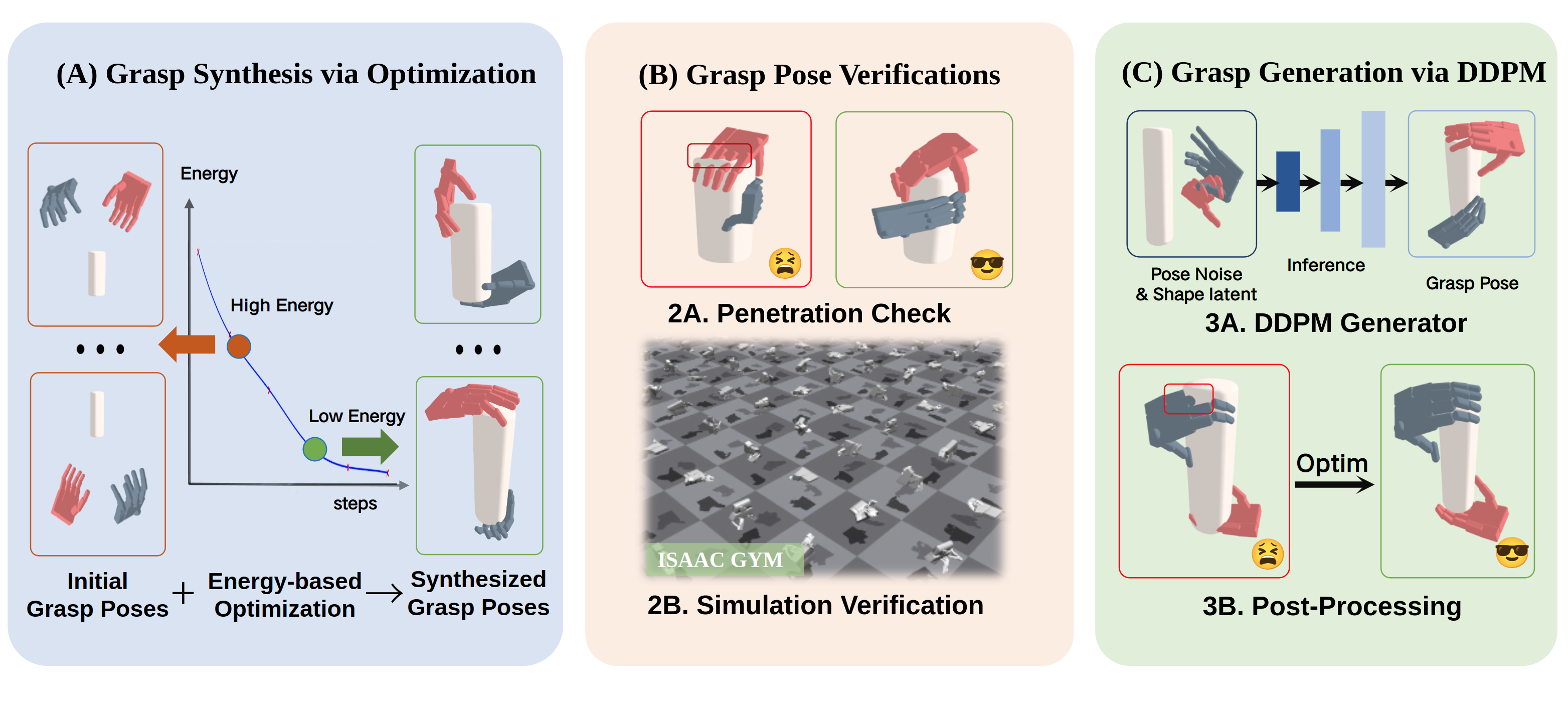

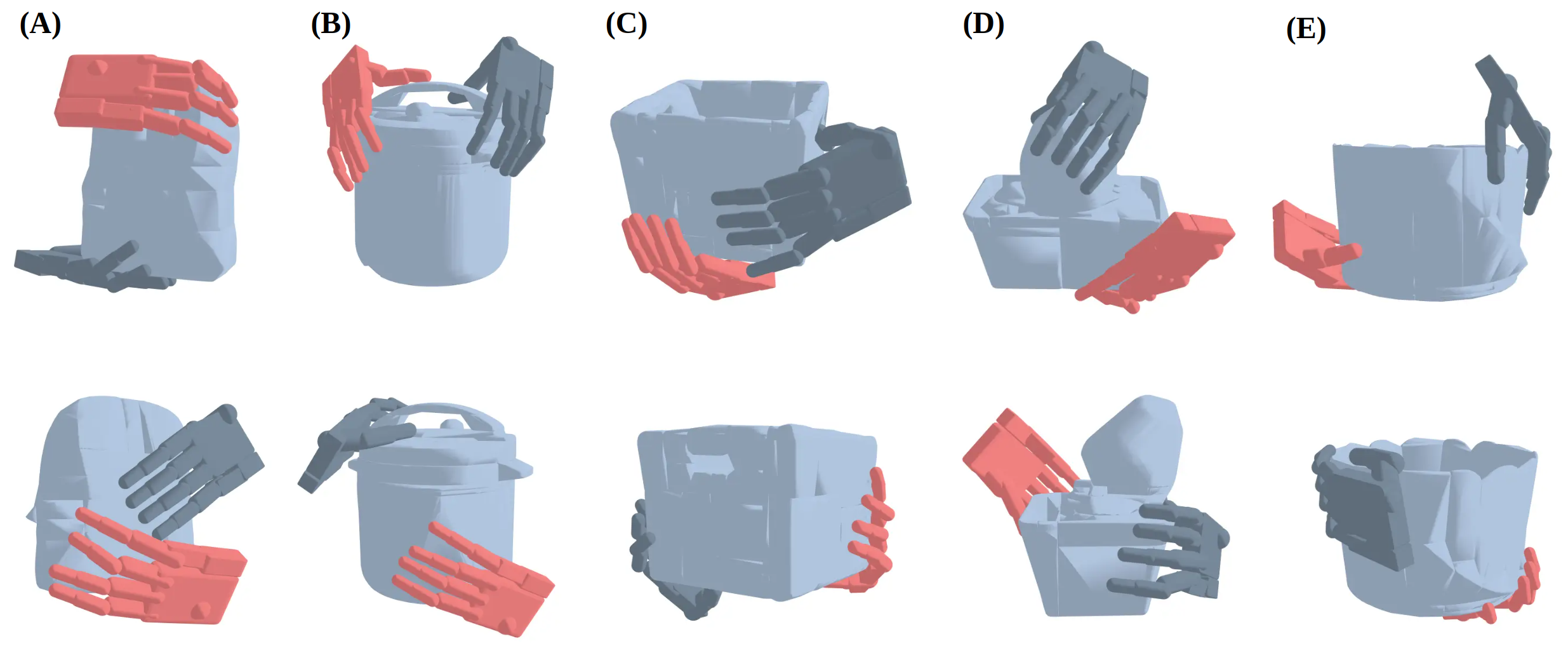

The synthesized grasps cover a wide range of containers and household geometries instead of a single narrow object family.

Verification and dataset

The important design choice is that geometry alone is not accepted as evidence. Every candidate grasp is validated in Isaac Gym with a PD controller, fixed friction settings, randomized joint-object rotations, and a success criterion tied to actual holding performance.

A grasp enters the dataset only if:

- the object stays in hand for 2.0 seconds of simulation time;

- the verification rollout remains stable under changed gravity directions;

- total penetration stays below the threshold used by the paper.
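The acceptance rule above amounts to a conjunction of checks. A minimal sketch follows, assuming a hypothetical interface in which each gravity direction yields one hold time. The function name and arguments are illustrative, and the penetration threshold is left as a caller-supplied parameter because the text does not state its value.

```python
REQUIRED_HOLD_S = 2.0  # from the text: the object must stay in hand for 2.0 s

def accept_grasp(hold_times_s, total_penetration, penetration_threshold):
    """Return True only if the grasp passes every check.

    hold_times_s: hold time in seconds for each tested gravity direction
                  (hypothetical interface, one rollout per direction).
    total_penetration: total penetration measured for the grasp.
    penetration_threshold: the paper's threshold, supplied by the caller.
    """
    if not hold_times_s:
        return False  # no rollouts means nothing was verified
    held_everywhere = all(t >= REQUIRED_HOLD_S for t in hold_times_s)
    return held_everywhere and total_penetration < penetration_threshold

# Usage with made-up numbers: one failed gravity direction rejects the grasp.
kept = accept_grasp([2.0, 2.0, 2.0], total_penetration=0.001, penetration_threshold=0.002)
dropped = accept_grasp([2.0, 1.2, 2.0], total_penetration=0.001, penetration_threshold=0.002)
```

Requiring every rollout to pass, rather than a majority, matches the intent that a dataset label reflects a grasp that actually holds.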

That produces BimanGrasp-Dataset, a collection of more than 150K verified grasps over 900 daily objects. The dataset is useful not just because it is large, but because the labels come from physical verification rather than visual plausibility.

The dataset spans qualitatively different contact modes: rim pinches, wrap grasps, support contacts, opposing grasps, and object-specific asymmetric strategies.

From optimization to fast generation

Once the verified set exists, the paper turns grasp synthesis into a conditional diffusion problem: a model generates the target bimanual grasp conditioned on an object feature extracted from geometry.

The learning objective is the standard noise-prediction DDPM loss.
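For reference, the standard conditional noise-prediction objective can be written as follows. The symbols are chosen here for illustration and are not taken from the paper: $g_0$ denotes a verified bimanual grasp, $c$ the object feature, $t$ the diffusion step, $\bar{\alpha}_t$ the cumulative noise schedule, and $\epsilon_\theta$ the denoising network.

$$
\mathcal{L}(\theta) = \mathbb{E}_{g_0,\, c,\, t,\, \epsilon \sim \mathcal{N}(0, I)} \left[ \left\| \epsilon - \epsilon_\theta\!\left( \sqrt{\bar{\alpha}_t}\, g_0 + \sqrt{1 - \bar{\alpha}_t}\, \epsilon,\; t,\; c \right) \right\|^2 \right]
$$

The network sees a noised grasp together with the object feature and is trained to recover the noise that was added, so at sampling time it can denoise from pure noise toward a grasp that fits the object.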

The paper still applies a short post-optimization pass after generation, which is a pragmatic choice: the diffusion model is fast, while the optimizer remains the final tool for penetration cleanup.

Main results

Two quantitative results matter most.

| Setting | BimanGrasp | Baseline |
|---|---|---|
| Object density kg/m³ | 41.02% | 32.87% (Uni2Bim (opt)) |
| Object density kg/m³ | 54.03% | 45.26% (Uni2Bim (opt)) |
| Object density kg/m³ | 71.42% | 56.69% (Uni2Bim (opt)) |
| DDPM on unseen objects, kg/m³ | 54.06% | comparable to optimization |
| DDPM on unseen objects, kg/m³ | 69.87% | comparable to optimization |

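These numbers support a simple claim: bimanual coordination should be modeled jointly. BimanGrasp leads the Uni2Bim (opt) baseline by 8.15, 8.77, and 14.73 percentage points across the three density settings, and the DDPM results on unseen objects show that a physically verified synthesis pipeline can provide enough structure to train a fast generator without collapsing quality.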

Why this work matters

BimanGrasp is a good example of a useful research pattern for dexterous manipulation:

- use geometry and mechanics to build a dataset before reaching for large learned policies;

- separate generation from verification so success is testable;

- keep the learning target close to a physically meaningful search procedure.

Venue

Published in IEEE Robotics and Automation Letters (RA-L) and presented at ICRA 2025.

Authors: Yanming Shao, Chenxi Xiao*